A designer spends three days perfecting a dashboard layout. Every card is aligned. The spacing is intentional. The colour tokens are consistent.

Then a developer screenshots the Figma file, pastes it into an AI coding agent, and gets back a wall of hardcoded hex values, absolute positioning, and div soup.

Sound familiar?

The gap between what a designer means and what an AI agent interprets has been one of the biggest friction points in modern product development. But we've found a way to close it, and it doesn't require new tools or bigger budgets. It requires better Figma hygiene and a structured approach to the Figma MCP server.

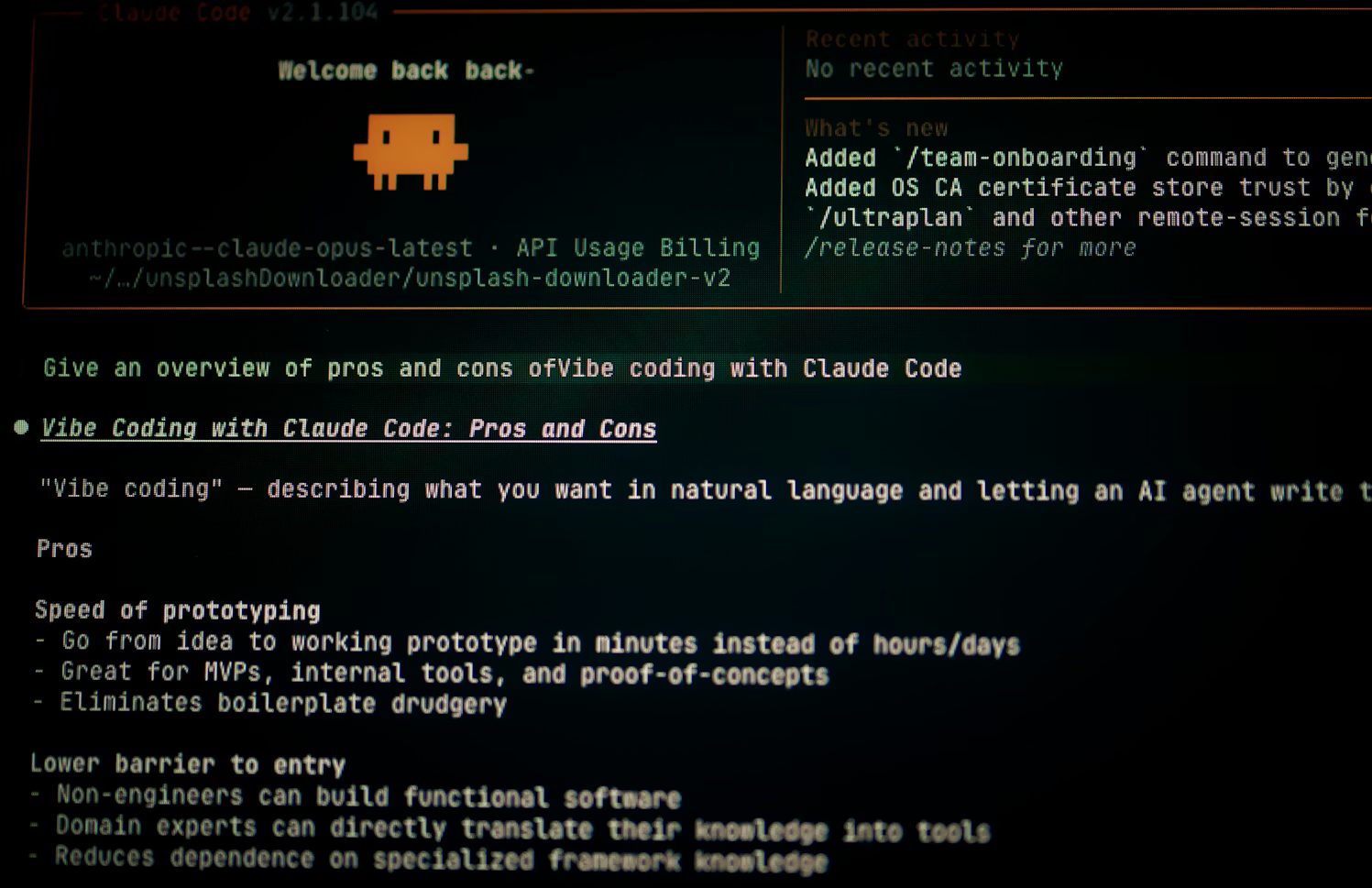

At PixelBeard, we've been running our design-to-code workflow through Figma's MCP server with agentic coding tools like Claude Code, Cursor, and GitHub Copilot. The results have been significant: fewer manual corrections, tighter design fidelity, and a development cycle that actually respects the designer's intent.

Here's exactly how we do it, and why your Figma layer names matter more than you think.

What the Figma MCP Server Actually Changes for Design-to-Code

MCP (Model Context Protocol) is an open standard that lets AI agents pull structured data from external tools. Figma's MCP server, which launched in beta in June 2025 and has been evolving rapidly since, exposes your design files as rich, queryable context rather than flat images.

That distinction is everything.

When a coding agent connects to Figma via MCP, it doesn't just see pixels. It reads your component hierarchy, your auto-layout rules, your variables, your spacing tokens, and yes, your layer names. All of that feeds directly into the code it generates. The agent receives structured layout data, design tokens, and a screenshot for visual verification, all from a single prompt.

The old designer-to-developer handoff looked like this: designer exports specs, developer interprets them, AI guesses at the rest. The designer-to-agent handoff cuts out the guesswork entirely. The agent reads the design file the way a senior developer would read a well-documented codebase.

But here's the catch. That only works if the design file is worth reading.

Why Semantic Figma Layer Naming Is the Highest-Leverage Fix

Let's be blunt. If your Figma file is full of layers named "Group 12," "Frame 47," and "Rectangle 203," you're handing an AI agent a book with no chapter titles, no table of contents, and half the pages stuck together.

Layer names are metadata. When the Figma MCP server transmits your design structure to a coding agent, those names show up directly in the generated output. A layer called "hero-section-cta" tells the agent exactly what it's building. A layer called "Group 5" tells it nothing, and the agent fills in the blanks with assumptions that almost always need correcting.

We've seen this play out dozens of times internally. The same design, structured two different ways, produces wildly different code quality from the same AI model. Figma's own developer documentation makes the same point: replace default names like "Frame1268" with intent-driven ones like "CardContainer" or "CTA_Button" so the model understands both the element's role and its expected functionality.

Here's what we enforce across every PixelBeard project:

- Semantic naming that mirrors code conventions. "pricing-card-container," "nav-menu-dropdown," "user-avatar-wrapper." The agent reads these and generates components that map cleanly to your frontend architecture.

- Descriptive hierarchy, not visual hierarchy. Don't name layers based on what they look like ("big-text," "blue-box"). Name them based on what they do ("section-heading," "feature-highlight"). AI models respond to functional context far better than visual description.

- No default names. Ever. If Figma auto-generated the name, it needs replacing before handoff. Figma AI's bulk rename feature can batch-process this in seconds using a layer's contents, location, and relationship to surrounding elements. The Rename It plugin handles anything it misses.

This isn't busywork. It's the single highest-leverage thing a designer can do to improve AI code generation quality.
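To make the naming rules above concrete, here is a minimal sketch of a pre-handoff check. It is not part of Figma or the MCP server: the function names, the list of default prefixes, and the list of "visual" words are all assumptions for illustration, and you would feed it layer names from wherever you export them.

```typescript
// Hypothetical lint helper for layer names. The prefix list below is an
// assumption, not an exhaustive list of the names Figma auto-generates.
const DEFAULT_NAME = /^(Group|Frame|Rectangle|Ellipse|Vector|Line|Text|Image)\s?\d+$/i;

// Words that describe appearance rather than function (illustrative only).
const VISUAL_WORDS = new Set(["big", "small", "large", "blue", "red", "green", "grey", "gray"]);

type NameIssue = "default-name" | "visual-name";

// Returns the first problem found with a layer name, or null if it looks fine.
function checkLayerName(name: string): NameIssue | null {
  const trimmed = name.trim();
  if (DEFAULT_NAME.test(trimmed)) return "default-name";

  // Split on hyphens, underscores, and spaces so "blue-box" and "blue_box" both match.
  const words = trimmed.toLowerCase().split(/[-_\s]+/);
  if (words.some((word) => VISUAL_WORDS.has(word))) return "visual-name";

  return null;
}

// Collects every problematic name in a list of layer names.
function findBadLayerNames(names: string[]): Array<{ name: string; issue: NameIssue }> {
  const problems: Array<{ name: string; issue: NameIssue }> = [];
  for (const name of names) {
    const issue = checkLayerName(name);
    if (issue !== null) problems.push({ name, issue });
  }
  return problems;
}
```

With this sketch, "Group 12" and "Frame1268" are flagged as default names, "blue-box" is flagged as a visual name, and "hero-section-cta" or "CardContainer" pass untouched.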

Why Figma Auto-Layout Is Non-Negotiable for MCP Workflows

Auto-layout has always been good practice for responsive design. With Figma MCP in the picture, it's become a hard requirement.

Here's why. When the MCP server reads a frame, it translates auto-layout properties directly into layout logic: flex direction, gap values, padding, alignment, and wrap behaviour. An agent receiving this data can generate a responsive component on the first pass, with real spacing tokens instead of magic numbers.

Without auto-layout? The agent sees a static frame with absolute coordinates. It produces rigid code that breaks the moment a screen size changes. Your developer then spends an hour refactoring something that should have been right from the start.

We treat auto-layout as a structural language, not just a design convenience. Every frame that ships through our MCP pipeline uses it. Every component accounts for how it should behave at different breakpoints. The design file becomes the responsive specification, and the AI reads it natively.

Figma's Dev Mode already lets engineers preview how auto-layout responds at different widths. The MCP server takes that further by encoding those rules directly into the generated code. The designer's responsive intent arrives in the codebase intact, no interpretation required.
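As a rough illustration of that translation, the sketch below maps auto-layout properties to flexbox declarations. The field names follow the Figma Plugin API (layoutMode, itemSpacing, and so on); the shape of the data the MCP server actually sends may differ, so treat the AutoLayout interface and the autoLayoutToCss function as assumptions.

```typescript
// Assumed shape of one frame's auto-layout data. Field names mirror the
// Figma Plugin API; the MCP payload itself may be structured differently.
interface AutoLayout {
  layoutMode: "HORIZONTAL" | "VERTICAL";
  itemSpacing: number;
  paddingTop: number;
  paddingRight: number;
  paddingBottom: number;
  paddingLeft: number;
  primaryAxisAlignItems: "MIN" | "CENTER" | "MAX" | "SPACE_BETWEEN";
  counterAxisAlignItems: "MIN" | "CENTER" | "MAX";
  layoutWrap: "NO_WRAP" | "WRAP";
}

// Alignment along the main axis maps to justify-content.
const JUSTIFY: Record<AutoLayout["primaryAxisAlignItems"], string> = {
  MIN: "flex-start",
  CENTER: "center",
  MAX: "flex-end",
  SPACE_BETWEEN: "space-between",
};

// Alignment along the cross axis maps to align-items.
const ALIGN: Record<AutoLayout["counterAxisAlignItems"], string> = {
  MIN: "flex-start",
  CENTER: "center",
  MAX: "flex-end",
};

// Converts auto-layout settings into a flat map of CSS declarations.
function autoLayoutToCss(frame: AutoLayout): Record<string, string> {
  return {
    display: "flex",
    "flex-direction": frame.layoutMode === "HORIZONTAL" ? "row" : "column",
    "flex-wrap": frame.layoutWrap === "WRAP" ? "wrap" : "nowrap",
    gap: `${frame.itemSpacing}px`,
    padding: `${frame.paddingTop}px ${frame.paddingRight}px ${frame.paddingBottom}px ${frame.paddingLeft}px`,
    "justify-content": JUSTIFY[frame.primaryAxisAlignItems],
    "align-items": ALIGN[frame.counterAxisAlignItems],
  };
}
```

A frame without auto-layout has none of these fields to map, which is why the agent can only fall back to absolute coordinates.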

Design Tokens and Figma Variables: The Difference Between Fragile and Scalable

Here's a pattern we see constantly in client projects that come to us mid-build. Colours are defined as raw hex values. Spacing is hardcoded to pixel amounts. Typography sizes are set manually on every text layer.

The result? An AI agent generates code with "#3B82F6" instead of "var(--color-primary)". It uses "padding: 24px" instead of referencing a spacing token. Every value is brittle, disconnected, and impossible to maintain at scale.

Figma variables fix this at the source. When you map colours, spacing, border radii, and typography to variables, the MCP server passes those token names and values to the agent via the get_variable_defs tool. The generated code references your design system, not arbitrary values.

This is where the real compounding benefit kicks in. Set up your variables once, and every MCP-powered handoff inherits them automatically. Change a token value in Figma, regenerate the code, and the update propagates cleanly. No find-and-replace across fifty files. No missed instances of the old blue.
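To show what "referencing your design system" can look like in output, here is a small sketch that turns variable names and values into CSS custom properties. The input shape (a flat map such as "color/primary" to "#3B82F6") is an assumption; the real get_variable_defs response may be structured differently, and the function names are made up for this example.

```typescript
// Assumed input: a flat map of Figma variable names to resolved values.
// This shape is hypothetical, not the documented get_variable_defs format.
type VariableDefs = Record<string, string | number>;

// "color/primary" -> "--color-primary", "Spacing/Large Gap" -> "--spacing-large-gap"
function toCustomPropertyName(variableName: string): string {
  const slug = variableName
    .trim()
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-") // collapse slashes, spaces, etc. into hyphens
    .replace(/^-+|-+$/g, ""); // drop leading and trailing hyphens
  return `--${slug}`;
}

// Builds a :root block declaring every variable as a CSS custom property.
function toCssRoot(defs: VariableDefs): string {
  const lines = Object.entries(defs).map(([name, value]) => {
    // Bare numbers are treated as pixel values in this sketch.
    const cssValue = typeof value === "number" ? `${value}px` : value;
    return `  ${toCustomPropertyName(name)}: ${cssValue};`;
  });
  return `:root {\n${lines.join("\n")}\n}`;
}
```

Generated components can then use var(--color-primary) everywhere, so changing the token in Figma and regenerating this one block updates every reference.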

For React projects, you can take this a step further with Code Connect, which maps Figma components directly to their code equivalents in your codebase. The agent doesn't just reference your tokens; it imports your actual components with the correct props and import paths. It's not available for every framework yet, but for supported stacks it's a significant upgrade.

Annotations: Giving AI Agents the Context a Screenshot Never Could

One of the most underused features in Figma for MCP-driven code generation is annotations.

Designers often rely on imagery to represent interactive elements. A static rectangle might stand in for an embedded map. A placeholder card might represent dynamic content pulled from an API. A button might have hover states, loading states, and error states that exist only in the designer's head.

Annotations make that invisible context visible to the agent. You can specify accessibility roles ("role: button," "alt: User profile photo"), interaction behaviour ("Navigate to /settings on click"), data expectations ("Displays user's first name from session"), or even CSS hints ("Change the color to bg-primaryButtonHover on hover").

The MCP server includes annotation data in the context it sends to AI agents. Suddenly, the generated code doesn't just look right; it behaves right. A card annotated with interaction details arrives with click handlers already wired. An image tagged with alt text generates accessible markup by default.
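As a simplified picture of how annotation text can end up in markup, the sketch below sorts annotation strings into HTML attributes and free-text notes. It assumes annotations arrive as plain strings in the "key: value" style used in the examples above; the parseAnnotations helper and the list of recognised keys are hypothetical, and a real agent interprets annotations far more flexibly than this.

```typescript
// Hypothetical helper: "key: value" annotations become attributes,
// anything else is kept as a note for the developer or agent.
interface ParsedAnnotations {
  attributes: Record<string, string>;
  notes: string[];
}

// Keys this sketch knows how to turn into attributes (illustrative list).
const KNOWN_KEYS = new Set(["role", "alt", "aria-label"]);

function parseAnnotations(annotations: string[]): ParsedAnnotations {
  const result: ParsedAnnotations = { attributes: {}, notes: [] };
  for (const raw of annotations) {
    // Match "key: value", tolerating extra whitespace around either part.
    const match = /^\s*([a-z-]+)\s*:\s*(.+?)\s*$/i.exec(raw);
    const key = match?.[1]?.toLowerCase();
    const value = match?.[2];
    if (key !== undefined && value !== undefined && KNOWN_KEYS.has(key)) {
      result.attributes[key] = value;
    } else {
      result.notes.push(raw.trim());
    }
  }
  return result;
}
```

Given "role: button", "alt: User profile photo", and "Navigate to /settings on click", the first two become attributes and the third stays as a behaviour note.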

The Full Figma MCP Design-to-Code Workflow in Practice

Here's how a typical PixelBeard MCP handoff looks from start to finish:

- Design phase. Designers build in Figma using components from our shared library, auto-layout on every frame, variables for all tokens, and semantic layer names throughout. Annotations are added for interaction logic, accessibility, and dynamic content.

- Connection. The developer opens their agentic coding tool (Claude Code, Cursor, Copilot in VS Code, or Codex) with the Figma MCP server configured to use the local desktop server. They paste the Figma frame URL into the prompt.

- Generation. The agent calls get_design_context and receives the full structural breakdown: component hierarchy, layout rules, token references, annotations, and a screenshot for visual verification. Code is generated using the project's framework and existing component library.

- Review. The developer reviews the output, makes targeted refinements (edge cases, complex state logic, API integration), and pushes. In our projects, the first pass is typically 80-90% production-ready, compared with roughly 40-50% from a screenshot-based approach.

- Iteration. If the design updates, the developer re-runs the MCP query. Because the design file is the source of truth, changes propagate cleanly without full rewrites.

The whole loop takes a fraction of the time it used to. Design intent stays intact. Developers focus on logic and architecture instead of pixel-pushing.
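The frame URL pasted in the connection step is what tells the agent which part of which file to read. As a sketch of what it carries, the helper below extracts the file key and node ID from a link. It assumes the common "https://www.figma.com/design/<fileKey>/<name>?node-id=12-345" form; parseFigmaFrameUrl is a made-up name, and the simple hyphen-to-colon conversion will not cover every kind of node ID.

```typescript
// Sketch: pull the file key and node ID out of a Figma frame URL.
// Assumes links of the form /design/<fileKey>/<name>?node-id=12-345.
interface FigmaFrameRef {
  fileKey: string;
  nodeId: string; // colon-separated form, e.g. "12:345"
}

function parseFigmaFrameUrl(input: string): FigmaFrameRef | null {
  let url: URL;
  try {
    url = new URL(input);
  } catch {
    return null; // not a valid URL at all
  }
  // Accept figma.com and its subdomains only.
  if (!/(^|\.)figma\.com$/.test(url.hostname)) return null;

  // Path looks like /design/<fileKey>/<fileName> (older links use /file/).
  const [, kind, fileKey] = url.pathname.split("/");
  if ((kind !== "design" && kind !== "file") || !fileKey) return null;

  const rawNodeId = url.searchParams.get("node-id");
  if (!rawNodeId) return null;

  // URLs write simple node IDs with a hyphen ("12-345"); the colon form is "12:345".
  return { fileKey, nodeId: rawNodeId.replace(/-/g, ":") };
}
```

A link without a node-id parameter points at the whole file rather than a frame, so this sketch returns null for it instead of guessing.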

The Bidirectional Workflow: Code Back to Figma

One of the most exciting developments from early 2026 is that the Figma MCP server now works in both directions. Agents can write directly to Figma files, not just read from them.

This changes the dynamic entirely. A developer prototyping in Claude Code can send a live UI back to Figma as editable design layers using the generate_figma_design tool. The designer can then refine it on the canvas, and the developer pulls those updates back into code with full design context intact.

We've started experimenting with this at PixelBeard for rapid prototyping sprints. A developer builds a rough functional prototype, pushes it to Figma, and the designer polishes the visual language using the team's existing components and variables. The next code generation pass incorporates those refinements automatically. No more separate "design version" and "dev version" drifting apart.

It's early days for this capability, and there are rough edges. But the direction is clear: the design file and the codebase are becoming a single, continuous loop rather than two separate artefacts connected by a handoff. Whether your team calls this vibe coding, agentic development, or just a better way of working, the shift is the same. The designer's file isn't a reference document anymore. It's a live input that shapes what gets built, and what gets built shapes it right back.

Figma MCP Skills: Teaching Agents How Your Team Works

Figma recently introduced Skills, reusable instruction sets that teach AI agents your team's specific conventions and workflows. A Skill might define how your team names layers, which components to reach for, what spacing conventions to follow, or how to structure a particular type of screen.

This is particularly relevant for agencies like us at PixelBeard that work across multiple client projects with different design systems. Instead of re-prompting the agent with context every time, a Skill encodes those rules once. The agent then applies them consistently across every handoff for that project.

The Figma Community already hosts a growing library of shared Skills, and you can write custom ones tailored to your team's workflow. Combined with well-structured design files, Skills are what push the MCP workflow from "useful shortcut" to "reliable production pipeline."

This Isn't About Replacing Developers

MCP doesn't eliminate the need for developers. It eliminates the boring, repetitive translation work that drains their time and introduces errors.

The code that comes out of an MCP-driven handoff still needs human eyes. Complex state management, API integration, performance optimisation, and edge case handling all require the kind of contextual reasoning that AI agents can't yet provide reliably.

What the Figma MCP server does eliminate is the three-hour cycle of "that padding is wrong," "that's the wrong shade of blue," "this doesn't resize properly on mobile." The design-to-code translation comes out right on the first pass, freeing developers to work on the parts that actually need their expertise.

For our clients, this translates directly into faster delivery and fewer revision cycles. For our team, it means less friction between disciplines and more time spent on the work that matters.

How to Get Started With Figma MCP in 2026

You don't need to overhaul your entire design system overnight. If you're looking to improve your design-to-code workflow with Figma MCP, start here:

- Rename your layers. All of them. Use Figma AI's bulk rename if you need to catch up quickly, then maintain the habit going forward.

- Apply auto-layout to every frame. If it's going through MCP, it needs responsive structure. Include consistent gap, padding, and min/max constraints.

- Set up Figma variables for your core tokens. Colours, spacing, typography, and border radius at minimum. Add code syntax to your variable collections for even cleaner output.

- Annotate interactive elements. Hover states, click behaviour, dynamic content expectations, and accessibility requirements.

- Connect the Figma MCP server to your team's coding agent and run a test handoff. Compare the output to what you'd get from a screenshot. The difference will speak for itself.

The design-to-dev handoff has always been where good ideas go to get diluted. With the Figma MCP server and a bit of file discipline, it becomes the place where they get built exactly as intended.

We've been refining this process across every project at PixelBeard in 2026, and we're seeing it reshape how quickly and accurately we move from concept to production. If your team is still relying on screenshots and Slack threads to bridge the gap between design and development, there's a faster way.

Want to see how AI is changing our wider workflow? Read our posts on AI upskilling for design teams and Bridging design and dev.

Looking at building a smarter design-to-code workflow for your team?

👉Let’s talk